Modern computing is increasingly split between closed, managed platforms and open-source systems that prioritize transparency and user control. As proprietary ecosystems grow more complex and restrictive, open-source alternatives continue to challenge the assumption that users must trade control for convenience. At what point does software stop being a tool and start becoming a controlled environment shaped by external interests? How much of modern computing is still truly owned by the user? And can open-source realistically redefine the balance of power in everyday systems?

A Growing Frustration – The Downfall of Modern Platforms

The rise of Linux is no secret, and its growth continues to accelerate across all industries. From servers to IoT devices, open-source solutions have proven themselves to be reliable, efficient, and secure alternatives to proprietary systems.

But the shift away from traditional platforms is about more than functionality; it’s increasingly about how we use computers, how we interact with technology, and most importantly, who gets to decide what our machines do.

The frustration behind the recent growth of Linux starts off subtly, with small annoyances that stack up over time: forced updates that interrupt work, settings that move around without warning, familiar workflows that quietly break.

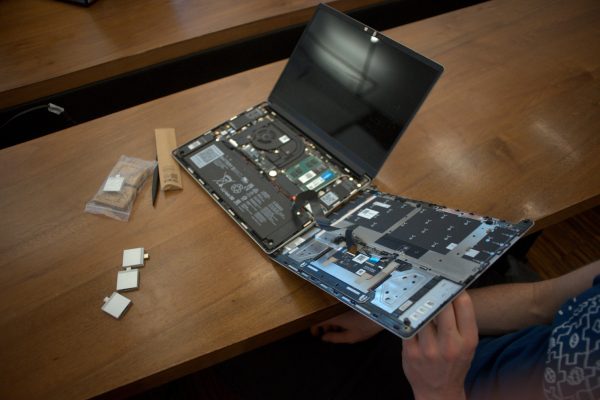

As modern proprietary operating systems have continued to update, hardware restrictions such as TPM 2.0 requirements have prevented many older, yet technically capable, machines from being eligible for upgrades. These requirements are often perceived as arbitrary by users, as the affected hardware can still support most everyday workloads (users have demonstrated this by bypassing the requirements and booting modern builds on systems more than 10 years old).

The interface itself shifts away from efficiency: more layers, more abstraction, and far less clarity. Instead of form following function, the system increasingly prioritizes visual uniformity, and, as a result, workflows that were once straightforward are now disrupted by additional abstraction and buried settings.

Control panels disappear, system settings hide behind simplified menus that guide you only where the designers want you to go, and when you try to take control, you find yourself stuck in a maze of options that don’t actually let you change how the system behaves.

AI becomes unavoidable, thrust into software where no one asked for it (see how AI assistants were added to basic system apps such as Notepad), and system-level AI embeds itself directly into the OS. While these tools are marketed as productivity features, their integration is tightly coupled with the operating system and can be difficult to fully disable.

This raises concerns for users who prefer to minimize AI-driven features or maintain a greater degree of privacy and autonomy. Local computing is now starting to look more like cloud dependency, as features that once lived on your machine now rely on remote services.

This also introduces additional problems: data exposure increases and privacy controls fragment, leaving you navigating a system designed to collect information about you. Furthermore, background systems expand, usage patterns become harder to track, and optimization tools begin to resemble behavioral tracking.

Making matters worse, advertising finds its way into system surfaces in the form of suggestions and promotions that blend into the OS, making it difficult to separate the machine from the marketing. Privacy controls do exist, yes, but they’re scattered, inconsistent, and often require digging through multiple layers of settings just to find what you’re looking for.

Updates move from optional to required, and their timing, frequency, and behavior become something outside your control. Trust becomes a default setting where you are expected to accept opaque systems you can’t fully audit.

In the end, the machine stops feeling personal and starts feeling like an endpoint inside a larger managed ecosystem, controlled by someone else, somewhere else. And that is where the real frustration builds: the system itself doesn’t belong to you anymore.

How Linux is Slowly Marching Forward

Unlike many proprietary platforms, Linux has seen its adoption increase gradually over the years, and it continues to grow. The motivation behind Linux and its many distributions has often come from a desire for greater control, transparency, and freedom from restrictive software ecosystems, and that motivation remains one of the core reasons people continue to switch.

Over time, Linux distributions have also become much more user-friendly, significantly lowering the complexity of installation and use. Distributions such as Ubuntu and Pop!_OS have helped to make this transition easier, and installing these systems is now about as simple as installing competing operating systems.

From these distros, user-focused systems have emerged, providing familiar workflows without the heavy restrictions of proprietary environments. Hardware support for Linux distros has also improved substantially as vendors and communities have continuously worked together to close driver gaps (NVIDIA has historically been one of the more difficult areas for Linux users, but its approach is now shifting toward better driver support).

Documentation is another area that has become far more accessible, and knowledge sharing and support networks have expanded significantly. In fact, even tools like ChatGPT have become highly beneficial for debugging and for helping users navigate the dreaded terminal window.

But probably the single most important change that Linux has experienced in the past few years is the public perception of Linux itself.

Once seen as a niche, hard-to-use platform, Linux has become a viable alternative for everyday computing. A major turning point came when Valve introduced Proton, a compatibility layer for Steam that allows many popular Windows games to run on Linux-based systems. This has massively challenged the idea that Linux is only suitable for technical users and applications, encouraging more users to switch over.

Compared to proprietary operating systems, Linux often shows performance advantages, with systems running more efficiently without background overhead or forced services (idle CPU and RAM usage is typically lower on Linux machines, though this varies by distribution and desktop environment). Privacy also stands out as a foundational feature, with most distributions shipping little or no telemetry by default, no hidden data pipelines, and more control consistently placed in the hands of the user. These traits remain central to its appeal today.

Government agencies and educational institutions have also explored and, in some cases, implemented Linux-based systems to reduce dependency on proprietary vendors and improve operational sovereignty (for example, the French National Gendarmerie has migrated the bulk of its workstations to GendBuntu, an Ubuntu-based distribution). With developers continuing to build commercial products and services around Linux foundations, Linux has moved from the edge toward the center, steadily reshaping the computing landscape.

Why This Demonstrates the Power of Open-Source

This move away from traditional platforms towards open-source designs clearly demonstrates how open-source changes the relationship between users and the systems they rely on. Because open-source exposes the full system, trust is no longer based on branding or marketing, but on what the system actually does.

Open-source development is also a highly public process, where problems are handled in full view. Because everyone has access to the inner workings, security issues tend to be found and fixed faster, and it becomes far harder to hide backdoors or undocumented features that might compromise privacy or integrity.

Having said that, there have been cases where contributors have managed to insert malware or backdoors disguised as legitimate changes (see the XZ Utils backdoor), but such cases are rare and are often caught quickly.

The very idea of open-source also changes how innovation works. Since code and designs are openly shared, ideas can be reused and new projects built on the foundations of others, so that progress compounds organically. Forking projects becomes a legitimate option when direction or strategy no longer match user needs, and projects that diverge can thrive independently, allowing these forks to become hyper-specialized in their respective applications.

The net result is a software ecosystem that becomes highly responsive, driving serious development. Even corporate involvement in open-source projects is now growing (as these very corporations may be dependent on open-source solutions), helping to further improve solutions with paid, full-time engineers.

Thus, what we see is that open-source projects become a balancing force, pushing back against centralized control in software ecosystems. Of course, projects still require funding and support, but the model evolves away from top-down ownership toward collaborative development models that value community input equally with corporate resources.

Long-term, this creates a fundamentally different kind of software ecosystem: systems that are transparent, accountable, and built on a foundation of public collaboration, and projects that are less dependent on a single company’s survival or strategic direction and more resilient to sudden shifts, acquisitions, or internal restructuring. Open-source also gives users structural leverage: the ability to migrate, modify, or fork if commercial options no longer serve their needs.

What Will the Future of Engineering Look Like?

If the past few years have taught us anything, it’s that engineering is moving toward a more decentralized future. The pushback against closed platforms, the rise of open-source alternatives, and the growing demand for control and transparency all point to a changing landscape where software ecosystems will no longer be able to dictate terms to users.

Linux is already the default baseline environment for many industries (it dominates the server market and runs every system on the TOP500 supercomputer list), and as hardware continues to converge, standardizing tooling across sectors will become even more important. From medical devices to automotive systems, from industrial control to edge computing, the underlying architecture will likely move closer to a single, unified Linux-based framework (especially with the rise of open ISAs like RISC-V, which only helps the open-source cause).

Now, that doesn’t mean every device will be running Ubuntu or Fedora, but it does mean that the core software stack will share common elements, compatible drivers, and interoperable interfaces. The net result from such an environment could be a future where engineering teams spend less time troubleshooting proprietary ecosystems and more time focusing on application-specific development.

In this world, systems are standardized, documented, and supported by active communities, rather than by a handful of vendors who may or may not exist in five years (or worse, decide to drop support at a moment’s notice). In this future, the burden of dependency is shared equally among developers, not borne solely by users.

Of course, this also means that the responsibility for ensuring security, compliance, and performance shifts back to the community. Open-source systems are only as good as the people maintaining them, and as those ecosystems scale, the pressure to maintain high standards will increase exponentially.

Furthermore, the complexity of these systems will grow, and the potential for vulnerabilities to slip through will expand alongside it (as we have seen in Linux and many other open-source offerings). But that’s not necessarily a bad thing. It’s a simple reminder to all of us engineers that engineering is no longer just about writing code or designing circuits, but about building systems that are accountable, transparent, and trusted by default.