While researching Augmented Foam, a 3D augmented reality interface for product design, I came across this great interface project from the Context-Aware Computing Group at MIT.

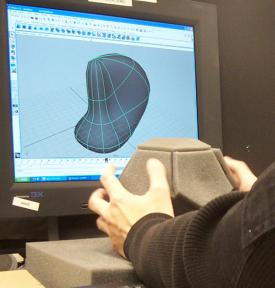

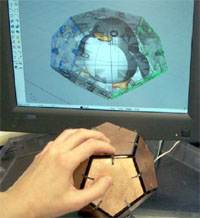

iSphere is an input device for modeling 3D geometries through hand manipulation. The device is equipped with capacitive sensors that relay the proximity of the user's hands to a 3D modeling system. By collecting proximity information between the hands and the device, it lets users manipulate 3D geometries with high-level modeling concepts such as pushing or pulling 3D surfaces.

Making 3D models should be an easy and intuitive task, like freehand sketching. iSphere is a 24-degree-of-freedom 3D input device: a dodecahedron embedded with 12 capacitive sensors for pulling out and pressing in 12 control points of a 3D geometry.
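To get a feel for how 12 proximity readings could drive 12 control points, here is a minimal Python sketch. It is not the MIT group's implementation, just my own guess at the idea. The function names (`face_normals`, `displace`), the normalized 0 to 1 readings, the `neutral`, `gain` and `dead_zone` parameters, and the convention that a closer hand pushes the surface in are all assumptions for illustration.

```python
import math

PHI = (1 + math.sqrt(5)) / 2  # golden ratio


def face_normals():
    """Unit normals of a regular dodecahedron's 12 faces.

    These are the normalized vertices of the dual icosahedron.
    """
    raw = []
    for a in (-1.0, 1.0):
        for b in (-PHI, PHI):
            raw += [(0.0, a, b), (a, b, 0.0), (b, 0.0, a)]
    length = math.sqrt(1 + PHI ** 2)
    return [(x / length, y / length, z / length) for x, y, z in raw]


def displace(control_points, readings, neutral=0.5, gain=1.0, dead_zone=0.05):
    """Move each control point along its face normal.

    Assumed convention (hypothetical): readings are normalized to 0..1,
    a reading above `neutral` (hand closer) presses the point inward,
    and a reading below `neutral` (hand farther) pulls it outward.
    """
    moved = []
    for point, normal, reading in zip(control_points, face_normals(), readings):
        delta = reading - neutral
        if abs(delta) < dead_zone:
            delta = 0.0  # ignore small sensor jitter around the rest position
        step = -gain * delta
        moved.append(tuple(p + step * n for p, n in zip(point, normal)))
    return moved


if __name__ == "__main__":
    points = face_normals()   # start with control points on a unit sphere
    readings = [0.5] * 12     # all sensors at rest
    readings[0] = 1.0         # hand close to sensor 0: press in
    readings[1] = 0.0         # hand far from sensor 1: pull out
    for before, after in zip(points, displace(points, readings)):
        print(before, "->", after)
```

A real setup would also need sensor calibration and smoothing, which this sketch leaves out.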

Experiments by Jackie Lee, Yuchang Hu & Ted Selker show that iSphere saved the steps of selecting control points and going through menus, letting subjects focus on what they wanted to build instead of how to build it. Novices spent significantly less time learning 3D manipulation and making conceptual models, but a lack of fidelity is an issue with an analog input device like this.

Traditionally, 3D designers plan and build their concepts in 3D CAD systems. This bottom-up approach limits the diversity of design outcomes during the early design stage. They propose a top-down 3D modeling approach that allows designers to play with and build 3D models and develop their concepts directly.

Top-down design is available in standard CAD packages such as SolidWorks, but this interface does look like an interesting alternative to a mouse, SpaceBall, or tablet and stylus. I can also imagine how an interface like this would make it easier for a novice to modify an existing design, making mass customization of 3D objects a little more accessible.

Check out the video on YouTube, and you can see how they use it like a theremin to model a boot in Maya. Impressive.

1 Comment

i am just waiting for this device to come out!! i am a 3d modelling artist, and i use Autodesk Maya!! If this thing comes out into the market, it will become a really great handy equipment for all of us!!!

Thanx for sharing this info!!! \m/
